
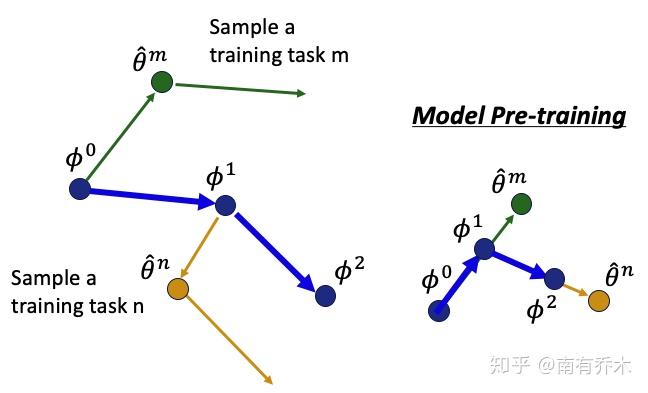

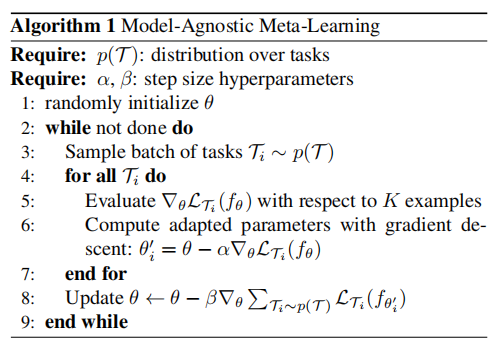

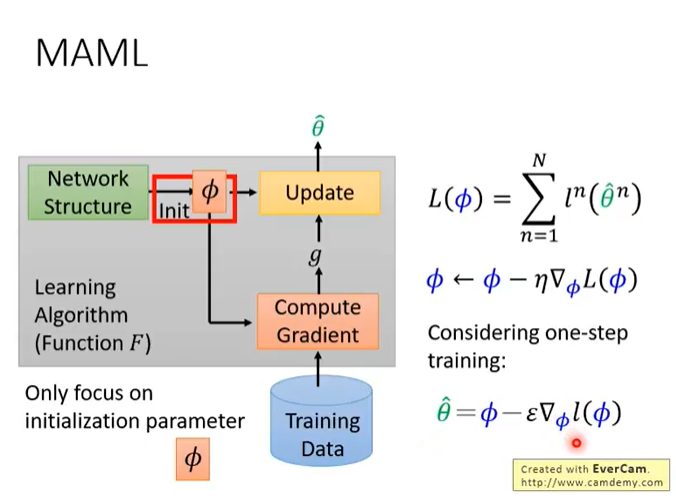

12/12/2023

MAML meta-learning

The following abstract is from the paper titled Multimodal Meta-Learning for Time Series Regression, by Sebastian Pineda Arango and 3 other authors:

Recent work has shown the efficiency of deep learning models such as Fully Convolutional Networks (FCN) or Recurrent Neural Networks (RNN) to deal with time series regression (TSR) problems. These models sometimes need a lot of data to be able to generalize, yet the time series are sometimes not long enough to be able to learn patterns. Therefore, it is important to make use of information across time series to improve learning. In this paper, we will explore the idea of using meta-learning for quickly adapting model parameters to new short-history time series by modifying the original idea of Model-Agnostic Meta-Learning (MAML). We propose a method for conditioning parameters of the model through an auxiliary network that encodes global information of the time series to extract meta-features. Finally, we apply the data to time series of different domains, such as pollution measurements, heart-rate sensors, and electrical battery data. We show empirically that our proposed meta-learning method learns TSR with few data fast and outperforms the baselines in 9 of 12 experiments.

“I'm very excited to try things out and I think it will be beneficial for the community,” he says. The fact that LLaMA 2 is an open-source model will also allow external researchers and developers to probe it for security flaws, which will make it safer than proprietary models, Al-Dahle says.

Learning to learn with hyperparameter optimization.

Model-agnostic meta-learning (MAML) is a meta-learning technique that trains a model on a multitude of learning tasks in a way that primes the model to adapt quickly to new tasks. Taken from Chelsea Finn’s original research: MAML is a meta-learning algorithm that is compatible with any model trained with gradient descent and covers problems from classification, reinforcement learning (RL), and regression [5]. Meta-learning has been widely applied to few-shot reinforcement learning problems, where we hope to obtain an agent that can learn quickly in a new task.
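The MAML description above (train across many tasks so that a few gradient steps suffice on a new one) can be written as two nested updates. The notation here is an assumption for illustration and is not taken from this post: $\theta$ are the shared model parameters, $\mathcal{T}_i$ is a task drawn from a task distribution $p(\mathcal{T})$, $\mathcal{L}_{\mathcal{T}_i}$ is that task's loss, and $\alpha$, $\beta$ are the inner and outer learning rates.

$$
\theta_i' = \theta - \alpha \, \nabla_\theta \mathcal{L}_{\mathcal{T}_i}(f_\theta)
$$

$$
\theta \leftarrow \theta - \beta \, \nabla_\theta \sum_{\mathcal{T}_i \sim p(\mathcal{T})} \mathcal{L}_{\mathcal{T}_i}(f_{\theta_i'})
$$

The first line adapts the parameters to a single task. The second line updates the shared parameters so that the adapted parameters perform well, which requires differentiating through the inner step.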

Meta says it did not remove toxic data from the data set, because leaving it in might help LLaMA 2 detect hate speech better, and removing it could risk accidentally filtering out some demographic groups. The company says it did not use Meta user data in LLaMA 2, and excluded data from sites it knew had lots of personal information. Despite that, LLaMA 2 still spews offensive, harmful, and otherwise problematic language, just like rival models. Nevertheless, Meta’s commitment to openness is exciting, says Luccioni, because it allows researchers like herself to study AI models’ biases, ethics, and efficiency properly.

The present work introduces an extension of Model-Agnostic Meta-Learning (MAML) and Multi-modal MAML (MMAML) to time series regression (TSR). We propose a design for the tasks such that we leverage the original, more common scenario in which few but long time series are available.
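The text above does not spell out how tasks are built from a long series, so the following is only a guess at one plausible construction, not the authors' design. It cuts a single long series into sliding windows and groups consecutive windows into small support/query sets. The function name `make_tasks` and all of its parameters are hypothetical.

```python
import numpy as np

def make_tasks(series, window, horizon, support_size, query_size):
    """Cut one long series into few-shot regression tasks (hypothetical design)."""
    # Build every (input window -> value `horizon` steps after the window) pair.
    n_pairs = len(series) - window - horizon + 1
    X = np.stack([series[i:i + window] for i in range(n_pairs)])
    y = np.array([series[i + window + horizon - 1] for i in range(n_pairs)])

    # Group consecutive pairs into non-overlapping tasks:
    # the first `support_size` pairs adapt the model, the rest evaluate it.
    task_len = support_size + query_size
    tasks = []
    for start in range(0, n_pairs - task_len + 1, task_len):
        s = slice(start, start + support_size)
        q = slice(start + support_size, start + task_len)
        tasks.append(((X[s], y[s]), (X[q], y[q])))
    return tasks

# Toy usage on a synthetic series of 200 points.
series = np.sin(np.linspace(0, 20, 200))
tasks = make_tasks(series, window=10, horizon=1, support_size=5, query_size=5)
print(len(tasks), tasks[0][0][0].shape)
```

Under this assumption, a few long series yield many short tasks, which is the kind of data a meta-learner needs.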

Al-Dahle says there were two sources of training data: data that was scraped online, and a data set fine-tuned and tweaked according to feedback from human annotators to behave in a more desirable way. LLaMA 2 was trained on 40% more data than its predecessor.

Multimodal Model-Agnostic Meta-Learning for Few-shot Classification. This project is an implementation of Multimodal Model-Agnostic Meta-Learning via Task-Aware Modulation, which is published in NeurIPS 2019. Please visit our project page for more information and contact Shao-Hua Sun for any questions.

Among meta-learning methods, model-agnostic meta-learning (MAML) [8] was introduced to extract common knowledge shared among a group of related tasks. Shared information is extracted via gradient descent and encoded as a set of meta-weights. The extracted prior is then used for fast adaptation to previously unseen tasks. A surge of recent work has been devoted to developing the theory and algorithms of MAML [2, 5, 13, 14, 17]. In particular, we show that Sign-MAML has computational costs similar to those of FO-MAML while providing significantly improved accuracy for few-shot tasks. … Sign-MAML in computation efficiency and accuracy.
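To make the meta-weights and fast-adaptation idea above concrete, here is a minimal NumPy sketch of first-order MAML (FO-MAML) on toy linear-regression tasks. Everything in it is an assumption for illustration: the function names, the toy task generator, and the learning rates are hypothetical. The `use_sign` flag reflects our reading that a sign-based variant takes the sign of the inner gradient; it is not a faithful reproduction of the published Sign-MAML algorithm.

```python
import numpy as np

def mse(w, X, y):
    """Mean squared error of the linear model X @ w."""
    return float(np.mean((X @ w - y) ** 2))

def mse_grad(w, X, y):
    """Gradient of the mean squared error with respect to w."""
    return 2.0 * X.T @ (X @ w - y) / len(y)

def adapt(w, X, y, inner_lr, use_sign=False):
    """One inner-loop step on a task's support set."""
    g = mse_grad(w, X, y)
    # Assumption: the sign-based variant steps along sign(g) instead of g.
    return w - inner_lr * (np.sign(g) if use_sign else g)

def meta_train(tasks, dim, inner_lr=0.05, outer_lr=0.05, epochs=200, use_sign=False):
    """Learn shared meta-weights with a first-order outer update."""
    w = np.zeros(dim)  # meta-weights shared across all tasks
    for _ in range(epochs):
        meta_grad = np.zeros(dim)
        for (Xs, ys), (Xq, yq) in tasks:
            w_task = adapt(w, Xs, ys, inner_lr, use_sign)
            # First-order approximation: use the query gradient at the adapted
            # weights and ignore how w_task depends on w (no second derivatives).
            meta_grad += mse_grad(w_task, Xq, yq)
        w = w - outer_lr * meta_grad / len(tasks)
    return w

def adapted_query_loss(w, tasks, inner_lr=0.05, use_sign=False):
    """Average query loss after one adaptation step from w."""
    return float(np.mean([mse(adapt(w, Xs, ys, inner_lr, use_sign), Xq, yq)
                          for (Xs, ys), (Xq, yq) in tasks]))

def toy_tasks(n_tasks, rng):
    """Hypothetical task family: linear targets with weights near a common center."""
    center = np.array([2.0, -1.0])
    tasks = []
    for _ in range(n_tasks):
        w_true = center + 0.1 * rng.normal(size=2)
        Xs, Xq = rng.normal(size=(5, 2)), rng.normal(size=(5, 2))
        tasks.append(((Xs, Xs @ w_true), (Xq, Xq @ w_true)))
    return tasks

rng = np.random.default_rng(0)
train_tasks, test_tasks = toy_tasks(20, rng), toy_tasks(20, rng)
w_fo = meta_train(train_tasks, dim=2)
w_sign = meta_train(train_tasks, dim=2, use_sign=True)
baseline = adapted_query_loss(np.zeros(2), test_tasks)
print("zero init:", baseline)
print("FO-MAML:", adapted_query_loss(w_fo, test_tasks))
print("sign variant:", adapted_query_loss(w_sign, test_tasks, use_sign=True))
```

Both variants need only first-order gradients, which is why their per-step cost is comparable. Any accuracy difference on real few-shot benchmarks cannot be inferred from this toy problem.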
